Alpha Lab

This is a place for tinkering. We build prototypes to test out novel ideas and connect our existing tools with cutting-edge tech.

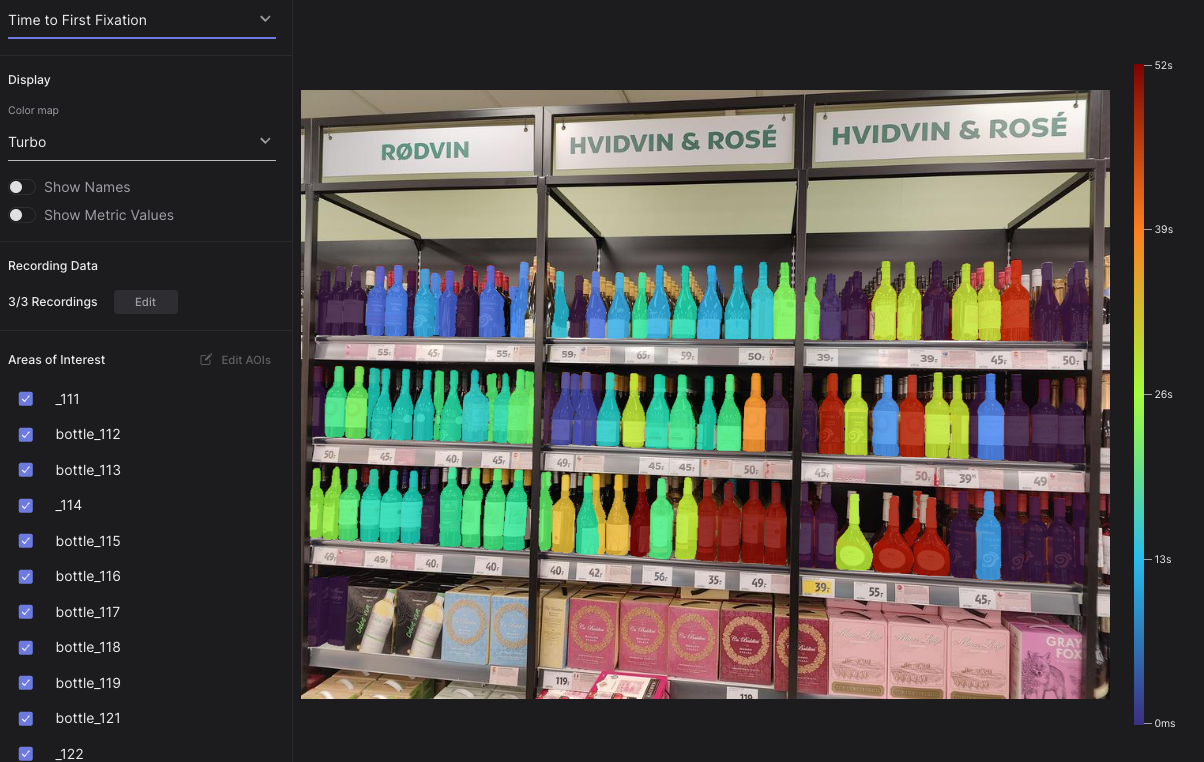

Explore AOI Data Across Measurements

Use this interactive tool to explore how your AOI metrics change over time, between conditions or wearer groups, or even in different contexts.

A Guide to Multiperson Eye Tracking

Learn how to control multiple devices simultaneously and send synchronized event markers to align data for multiperson studies.

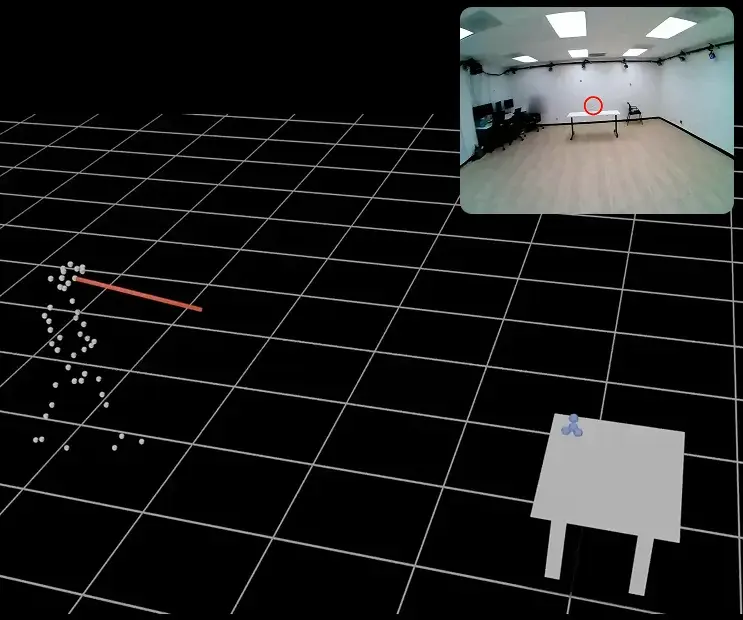

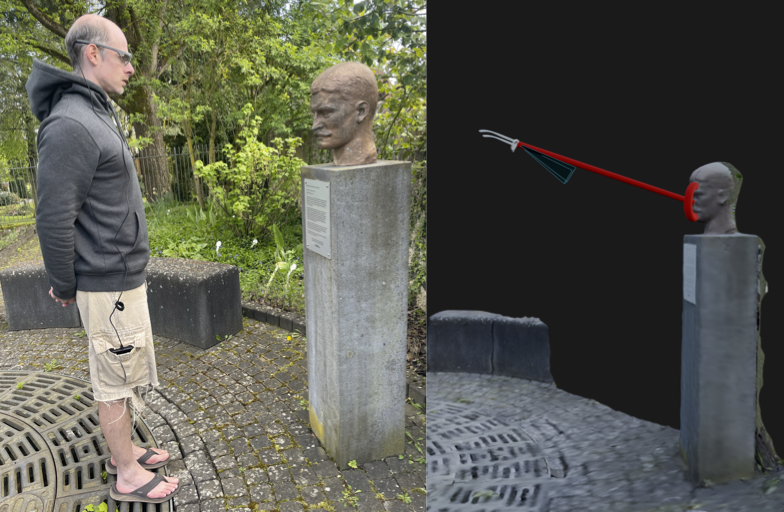

Motion Capture Integration with Neon

Spatially integrate Neon’s 3D gaze data with any marker-based motion capture system. Learn how to map gaze directly into your mocap environment using a simple, one-time calibration step.

Dynamic AOI Tracking with Neon and SAM2 Segmentation

Segment and map gaze onto any moving area of interest using Neon and SAM2.

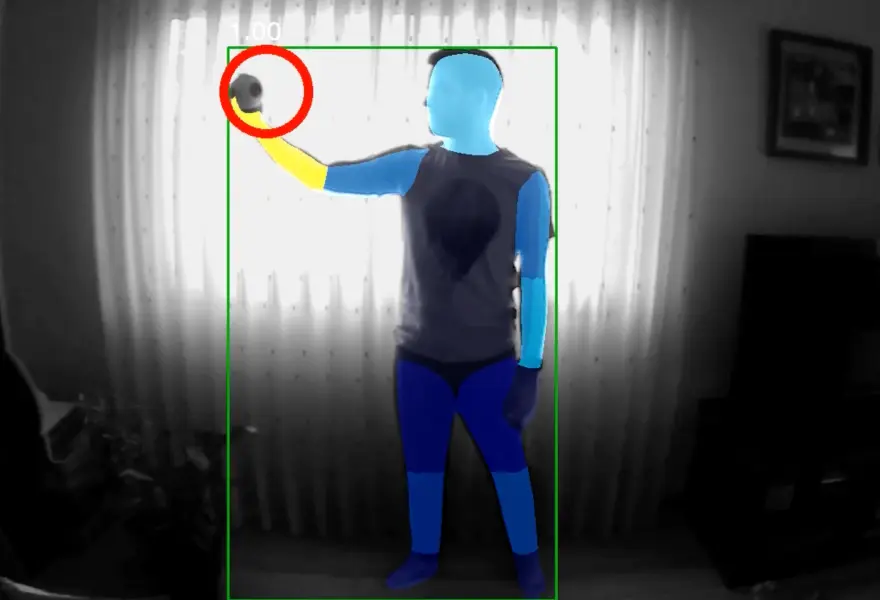

Map Gaze Onto Anything

Combine gaze with object and pose recognition in minutes. Learn how to augment your eye tracking data using powerful, open-source computer vision models, in real time or after the fact.

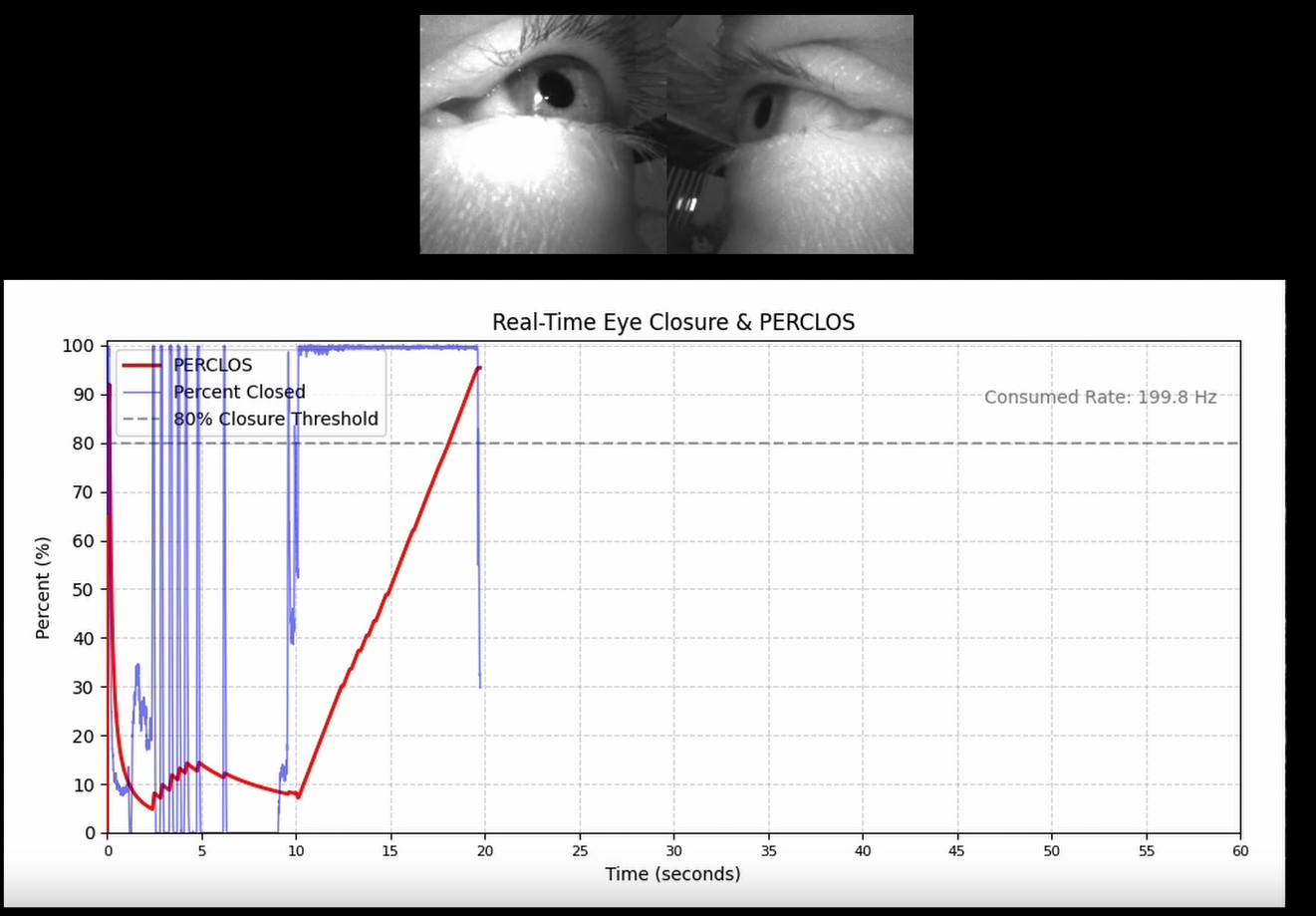

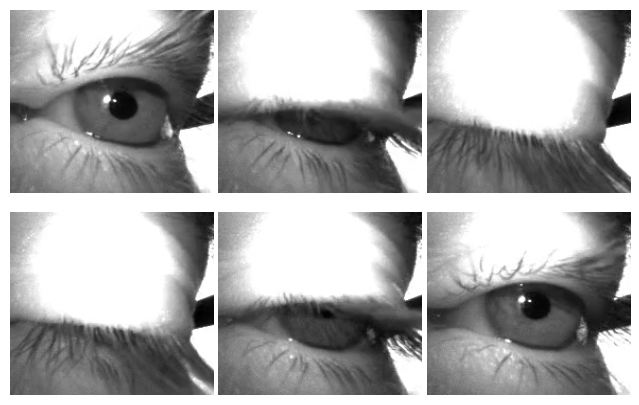

Real-Time Eyelid Dynamics with PERCLOS and Neon

Calculate PERCLOS (percentage of eye closure) in real time using Neon and its Real-Time API.
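
As a rough illustration of the idea, here is a minimal sketch of a rolling PERCLOS estimate. It assumes the common "P80" convention (an eye counts as closed when its aperture is at or below 20% of the fully open aperture) and a stream of eyelid aperture samples in millimetres. The class name, parameters, and threshold are illustrative assumptions, not the implementation used in the guide.

```python
from collections import deque


class RollingPerclos:
    """Rolling PERCLOS over the most recent `window_size` aperture samples.

    Hypothetical helper for illustration only; names and defaults are assumptions.
    """

    def __init__(self, open_aperture_mm, window_size, closed_fraction=0.2):
        # A sample counts as "closed" when the aperture is at or below
        # closed_fraction of the fully open aperture (0.2 ~ "80% closed").
        self.threshold_mm = closed_fraction * open_aperture_mm
        self.window = deque(maxlen=window_size)

    def update(self, aperture_mm):
        """Add one sample and return PERCLOS (in percent) for the current window."""
        self.window.append(aperture_mm <= self.threshold_mm)
        return 100.0 * sum(self.window) / len(self.window)


# Usage: feed aperture samples as they arrive.
tracker = RollingPerclos(open_aperture_mm=10.0, window_size=4)
for sample in [10.0, 1.0, 1.5, 9.0]:
    latest = tracker.update(sample)
```

With a fixed sampling rate, the window size in samples corresponds to a fixed time window (for example, 60 seconds of data), which is how PERCLOS is usually reported.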

Where did I see that? Eye Tracking & GPS

Use a GPS, like the one in Neon's Companion Device, to record synchronized location, eye, and head movement data. Visualize it on a map and click to jump there in your recording!

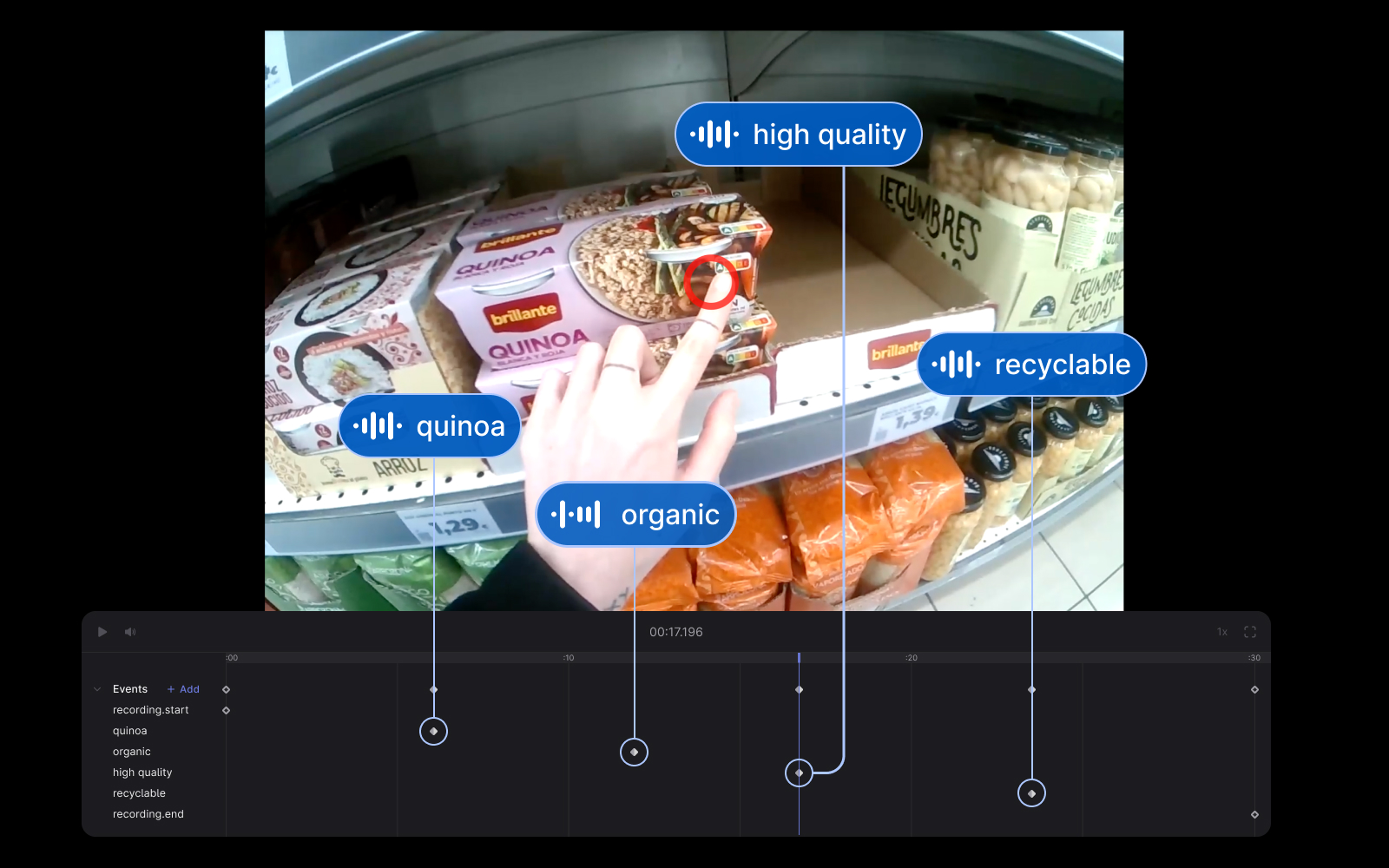

Audio-Based Event Detection With Pupil Cloud and OpenAI's Whisper

Automatically annotate important events in your Pupil Cloud recordings using Neon's audio capture and OpenAI's Whisper.

Map Gaze Onto an Alternative Egocentric Video

Automatically map gaze from the Neon scene camera onto an alternative, concurrently recorded egocentric video.

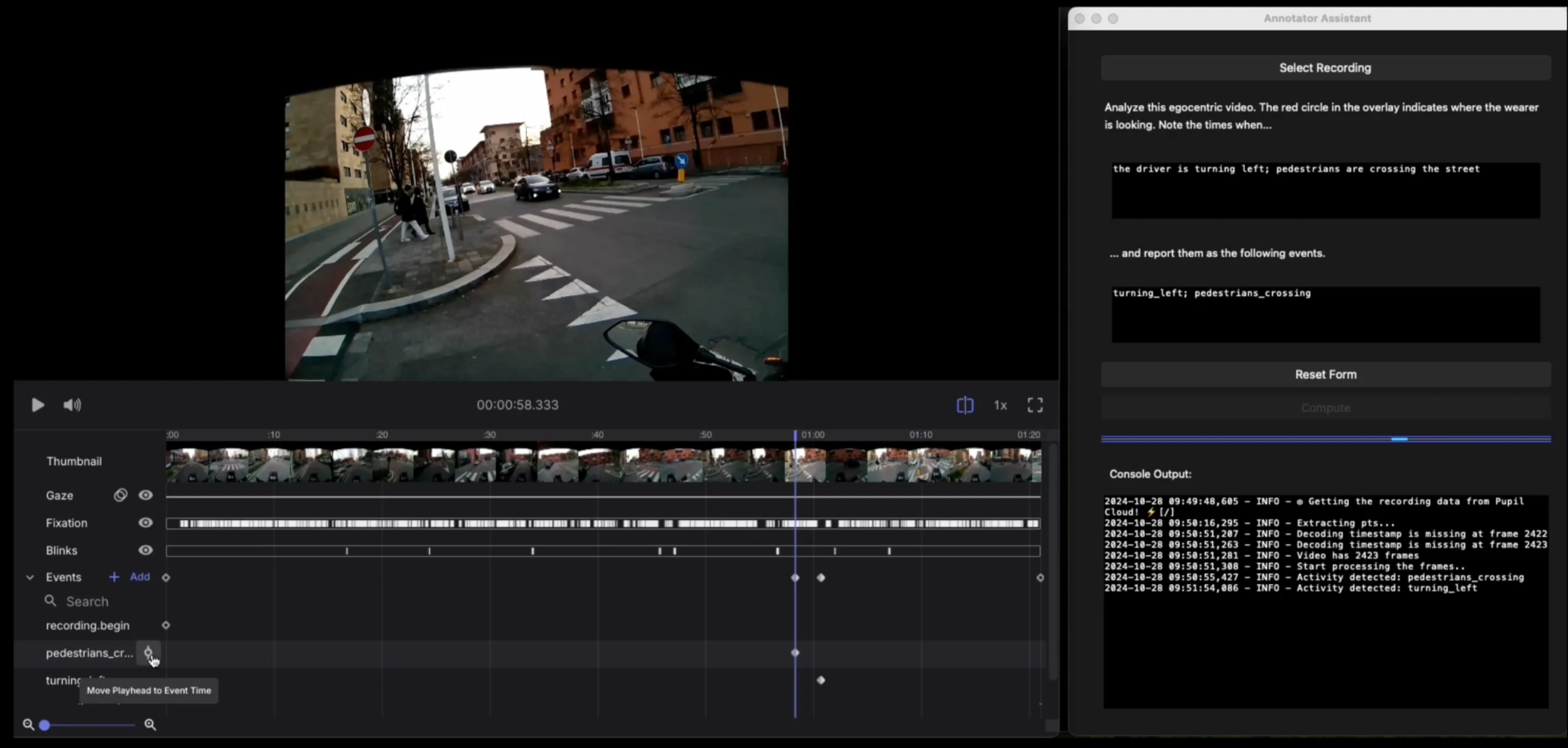

Automate Event Annotations With Pupil Cloud and GPT

Automatically annotate important activities and events in your Pupil Cloud recordings with GPT-4o.

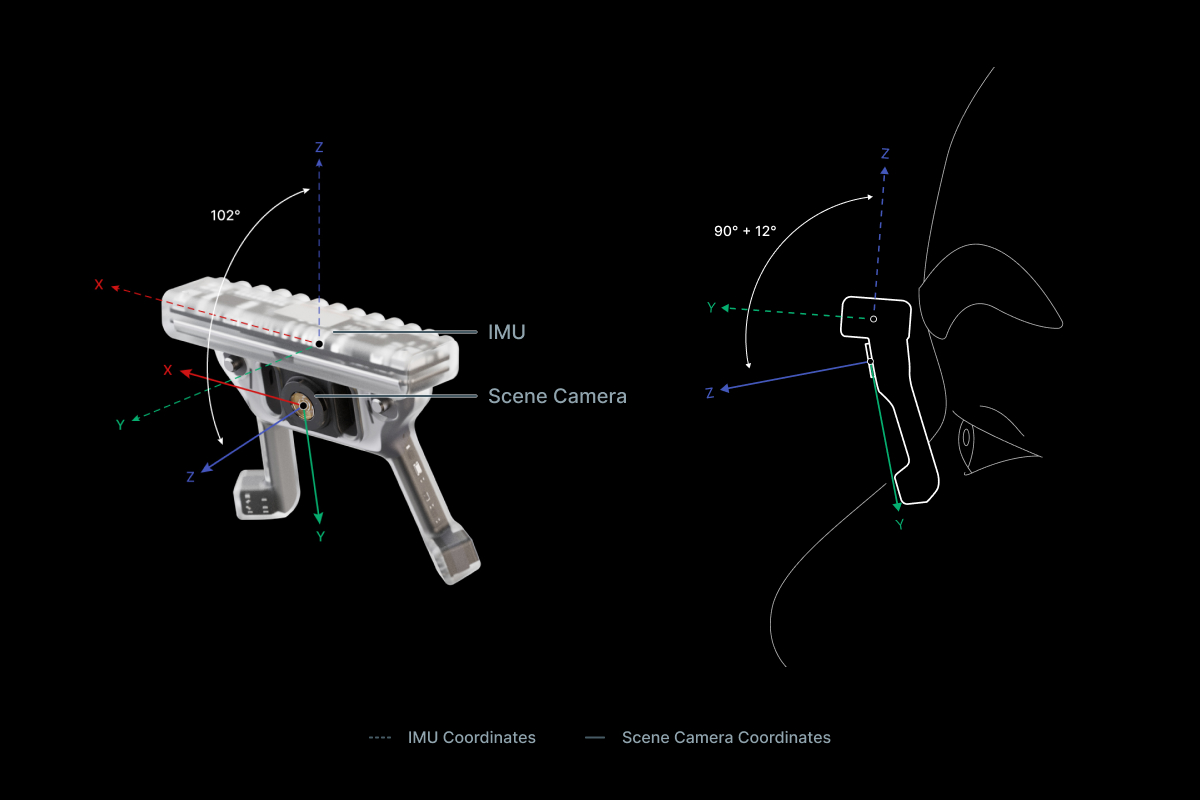

IMU Transformations

Transform IMU data into different representations and coordinate systems with these code snippets.
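
For a sense of what such a transformation looks like, here is a minimal sketch that converts a unit quaternion into roll, pitch, and yaw in degrees. It assumes a generic intrinsic Z-Y-X (yaw, pitch, roll) convention with the quaternion given as (w, x, y, z); the axis conventions of Neon's IMU may differ, so treat the function name and convention as assumptions rather than the snippets from the guide.

```python
import math


def quaternion_to_euler_deg(w, x, y, z):
    """Convert a unit quaternion (w, x, y, z) to (roll, pitch, yaw) in degrees.

    Assumes a generic Z-Y-X rotation order; this is an illustrative
    convention, not necessarily the one used by any particular device.
    """
    # Roll: rotation about the x-axis.
    roll = math.atan2(2.0 * (w * x + y * z), 1.0 - 2.0 * (x * x + y * y))

    # Pitch: rotation about the y-axis. Clamp to guard against values
    # slightly outside [-1, 1] caused by floating-point error.
    sin_pitch = max(-1.0, min(1.0, 2.0 * (w * y - z * x)))
    pitch = math.asin(sin_pitch)

    # Yaw: rotation about the z-axis.
    yaw = math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))

    return tuple(math.degrees(angle) for angle in (roll, pitch, yaw))


# Usage: a 90 degree rotation about the z-axis.
half = math.radians(90.0) / 2.0
roll_deg, pitch_deg, yaw_deg = quaternion_to_euler_deg(
    math.cos(half), 0.0, 0.0, math.sin(half)
)
```

Euler angles are easy to read but suffer from gimbal lock near a pitch of ±90 degrees, so quaternions are usually the safer representation for further computation.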

Map Gaze Into a User-Supplied 3D Model

Map gaze, head pose, and observer position into a 3D coordinate system of your choice using our Tag Aligner tool.

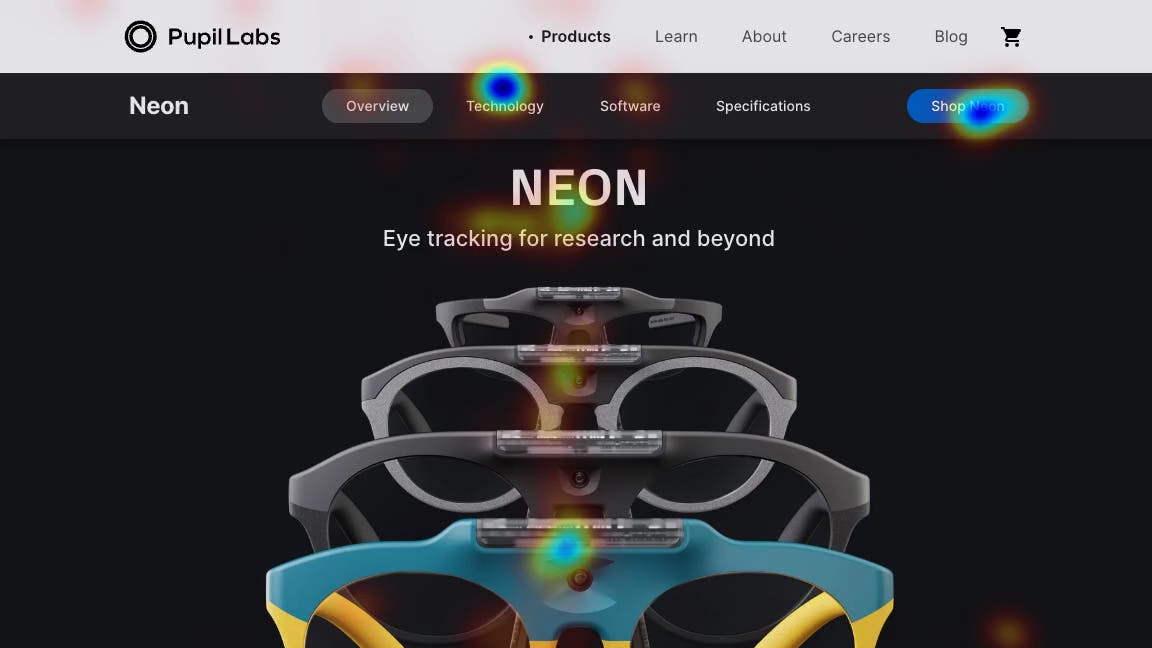

Map Gaze Onto Website AOIs

Define areas of interest on a website and map gaze onto them using our Web-AOI tool.

Map Gaze Onto Facial Landmarks

Map gaze onto facial landmarks using data exported from Pupil Cloud's Face Mapper.

Automate AOI Masking in Pupil Cloud

Extend the capabilities of Pupil Cloud's AOI tool by automatically segmenting and drawing masks using natural language.

Build an AI Vision Assistant

Experiment with assistive scene understanding applications using GPT-4V (an extension of GPT-4 that can interpret images) and Pupil Labs eye tracking.

Detect Eye Blinks With Neon

Apply the Pupil Labs blink detection algorithm to Neon recordings programmatically, either offline or in real time using the Pupil Labs real-time Python API.

Build Gaze-Contingent Assistive Applications

Build your very own gaze-contingent assistive applications (such as a gaze-controlled input device) using Neon eye tracking and our real-time screen gaze package.

Map Gaze Onto a 3D Model of an Environment

Map gaze onto a 3D model of an environment and visualise gaze patterns as 3D heatmaps using Pupil Cloud's Reference Image Mapper and Nerfstudio.

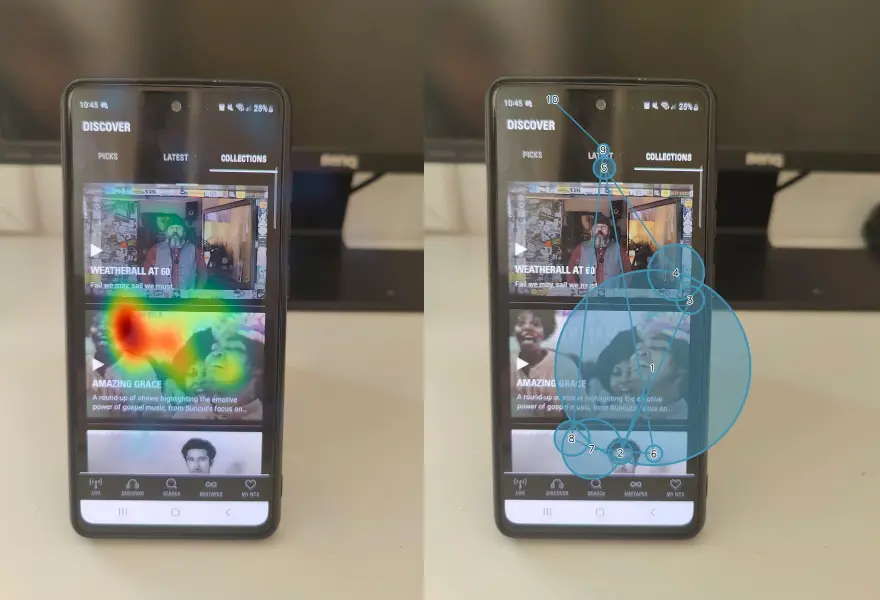

Uncover Gaze Behaviour on Phones

Capture and analyze users' viewing behaviour when focusing on small icons and features of mobile applications using Neon eye tracking alongside existing Cloud and Alpha Lab tools.

Generate Scanpath Visualisations

Generate both static and dynamic scanpath visualisations using exported data from Pupil Cloud's Reference Image Mapper or Manual Mapper.

Map Gaze Throughout an Entire Room

Use Pupil Cloud's Reference Image Mapper to map gaze onto multiple areas of an entire room as participants freely navigate around it.

Map Gaze Onto Body Parts

Map gaze onto body parts that appear in the scene video of Neon or Pupil Invisible recordings.

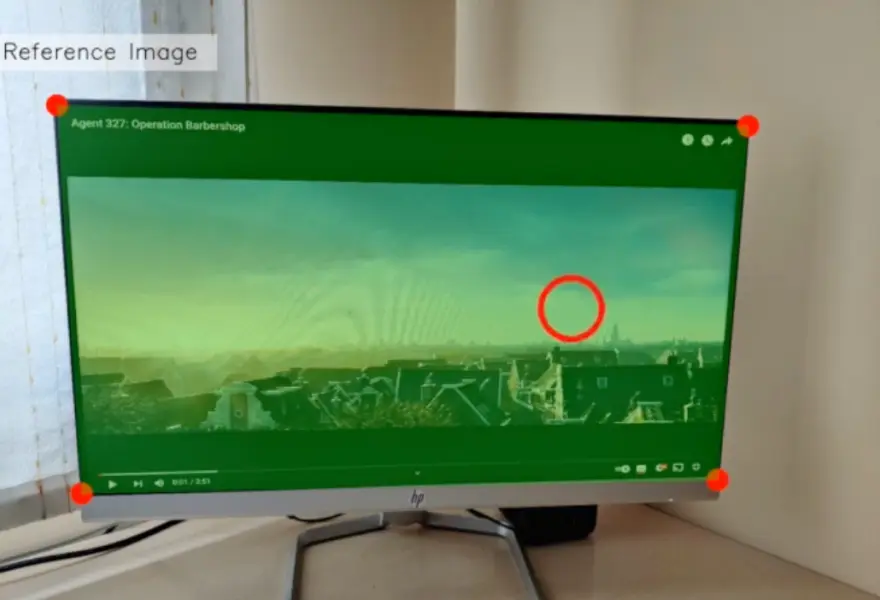

Map Gaze Onto Dynamic Screen Content

Map and visualise gaze on a screen showing dynamic content, such as a video or a web browsing session, using Pupil Cloud's Reference Image Mapper and screen recording software.

Use Neon with Pupil Capture

Use your Neon module as if you were using Pupil Core. Connect it to a laptop, and record using Pupil Capture.

Undistort Video and Gaze Data

Learn how to correct the lens distortion in the scene camera video and apply the same correction to gaze positions.