Ecosystem Overview

The Pupil Invisible ecosystem contains a range of tools that support you during data collection and analysis. This overview introduces the key components so you can become familiar with the tools at your disposal.

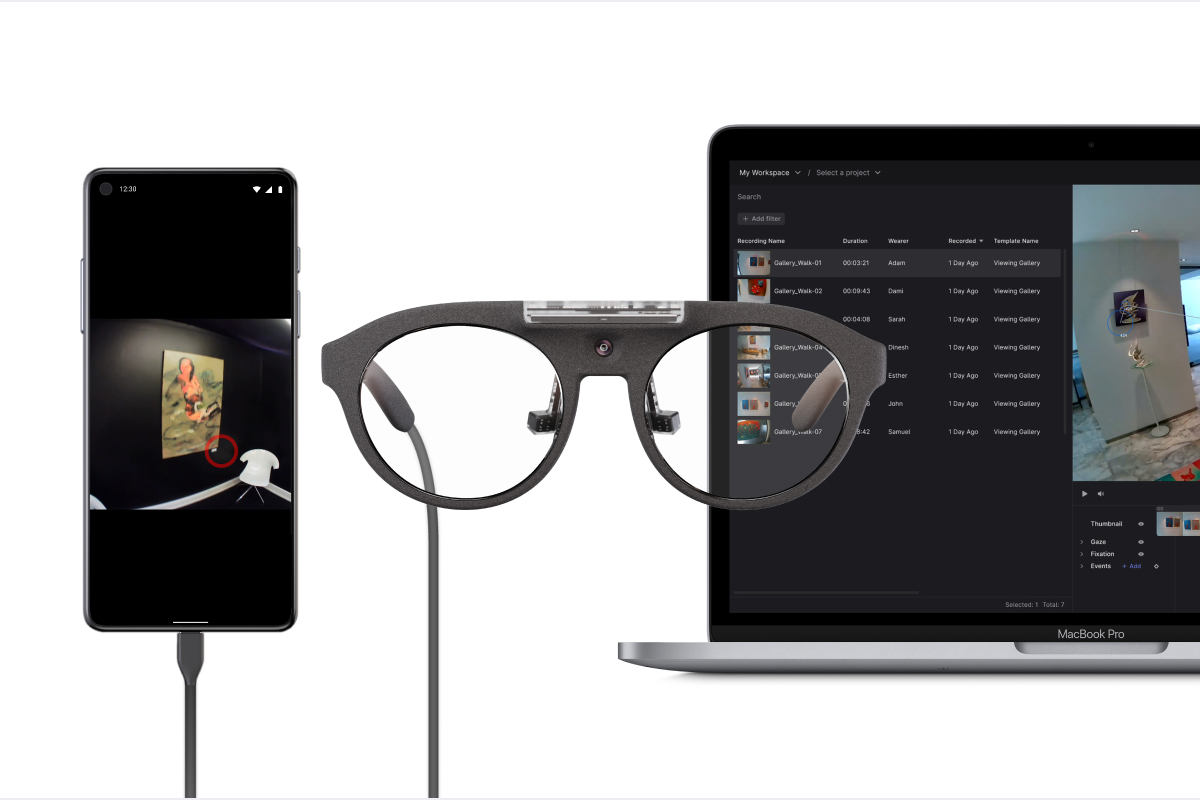

Pupil Invisible Companion app

You should have already used the Pupil Invisible Companion app to make your first recording. This app is the core of every Pupil Invisible data collection.

When your Pupil Invisible is connected to the Companion device, the device supplies it with power and the app calculates a real-time gaze signal. When you make a recording, all generated data is saved on the Companion device.

The app automatically saves UTC timestamps for every generated data sample. This allows you to easily sync your data with third-party sensors, or to sync recordings from multiple subjects that were made in parallel.
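Because every sample carries a UTC timestamp, aligning two data streams comes down to matching each sample with the closest sample in the other stream. The following is a minimal sketch of such nearest-timestamp matching, not an official tool. The function name `match_nearest`, the example values, and the use of integer timestamps in a shared unit are assumptions made for illustration.

```python
from bisect import bisect_left


def match_nearest(reference_ts, other_ts):
    """For each reference timestamp, return the index of the closest
    timestamp in other_ts. Both lists must be sorted in ascending order
    and use the same clock and unit (assumed here: UTC, integer values)."""
    if not other_ts:
        raise ValueError("other_ts must not be empty")
    matches = []
    for t in reference_ts:
        i = bisect_left(other_ts, t)
        if i == 0:
            matches.append(0)  # t lies before the first sample
        elif i == len(other_ts):
            matches.append(len(other_ts) - 1)  # t lies after the last sample
        else:
            before, after = other_ts[i - 1], other_ts[i]
            # Pick the closer neighbour; ties go to the earlier sample.
            matches.append(i - 1 if t - before <= after - t else i)
    return matches


# Hypothetical timestamps from a gaze stream and an external sensor.
gaze_ts = [1_000, 1_005, 1_010, 1_015]
sensor_ts = [998, 1_009, 1_021]
print(match_nearest(gaze_ts, sensor_ts))  # [0, 1, 1, 1]
```

In practice you may also want to discard matches whose time difference exceeds a tolerance suited to your sensors' sampling rates.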

Pupil Cloud

Pupil Cloud is our web-based storage and analysis platform located at cloud.pupil-labs.com. It is the recommended tool for handling your Pupil Invisible recordings. It makes it easy to store all your data securely in one place and it offers a variety of options for analysis.

Once a recording is uploaded to Pupil Cloud, the processing pipeline begins adding several additional low-level data streams to it, including the 200 Hz gaze signal and fixation data. Some of this data (e.g. the 200 Hz gaze signal) is not available outside of Pupil Cloud.

From here, you can either download the raw data in a convenient format or use some of the available analysis algorithms to extract additional information from the data. For example, use the Face Mapper to automatically track when subjects are looking at faces, or use the Reference Image Mapper to track when subjects are looking at objects of interest represented by a reference image.

We have a strict privacy policy that ensures your recording data is accessible only by you and those you explicitly grant access to. Pupil Labs will never access your recording data unless you explicitly instruct us to.

If Cloud upload is enabled in the Pupil Invisible Companion app, then recordings will be uploaded automatically to Pupil Cloud. No additional effort is required for data logistics.

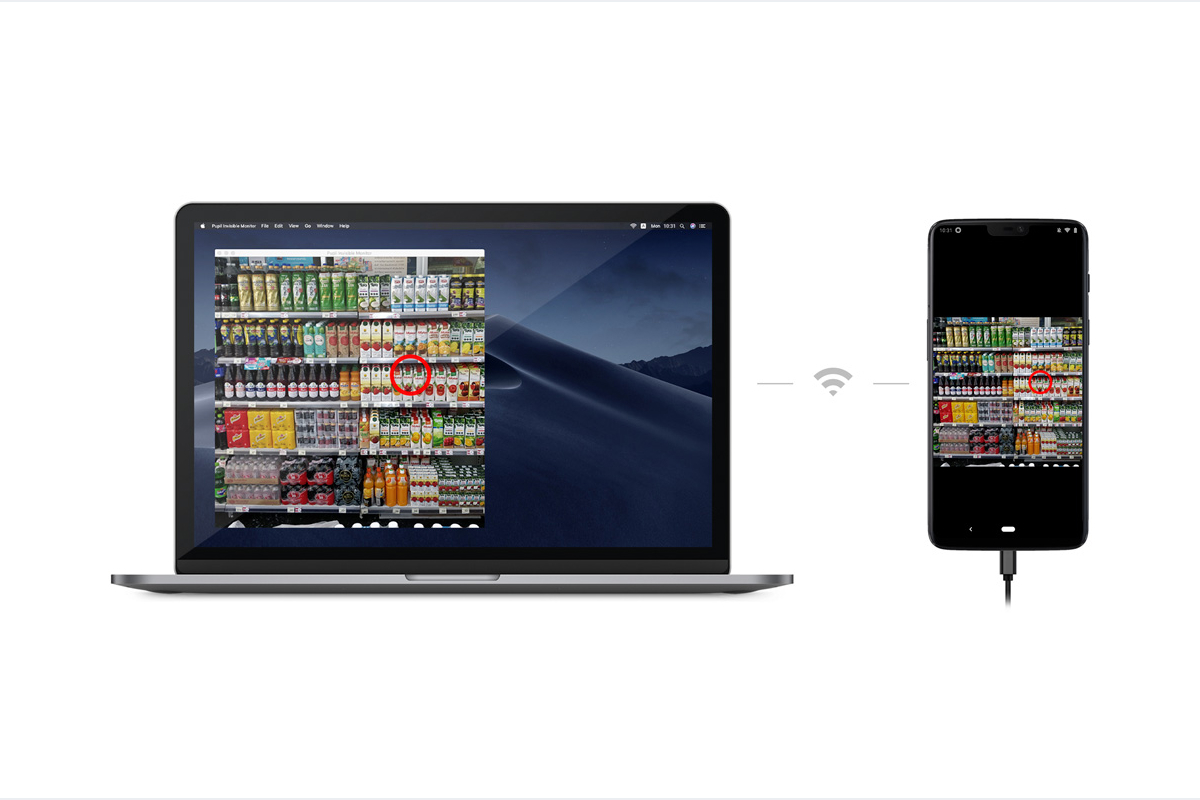

Pupil Invisible Monitor

All data generated by Pupil Invisible can be monitored in real-time using the Pupil Invisible Monitor app. To access the app, simply visit pi.local:8080 in your browser while connected to the same WiFi network as the Companion device.

The real-time streaming app enables you to monitor and control a recording session remotely without having to directly interact with the Companion device carried by the subject. You can view the scene video and gaze data remotely in your browser. You can also start and stop recordings and annotate important moments in time with events. All data will be saved with the recording on the Companion device.

Real-Time API

All data generated by Pupil Invisible is accessible to developers in real-time via our Real-Time API. Similar to the Pupil Invisible Monitor app, it allows you to stream data, remotely start/stop recordings and save events. The only requirement is that the Companion device and the computer using the API are connected to the same WiFi network.

This enables you to, for example, implement Human-Computer Interaction (HCI) applications with Pupil Invisible, or to streamline your experiments by remotely controlling your devices and saving events automatically.
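As a rough illustration of remote control, the sketch below starts a recording, marks the start and end of a trial with events, and then stops the recording. It assumes the `pupil-labs-realtime-api` Python package and its simple interface (`discover_one_device`, `recording_start`, `send_event`, `recording_stop_and_save`). The helper `run_trial` and the event names are hypothetical, so check the official API documentation before relying on any of these calls.

```python
def run_trial(device, trial_name):
    """Record one trial and mark its start and end with events.

    `device` is assumed to expose the simple Real-Time API methods
    used below; `trial_name` is a hypothetical label for the events."""
    device.recording_start()
    device.send_event(f"{trial_name}.start")
    # ... present your stimulus or run your task here ...
    device.send_event(f"{trial_name}.end")
    device.recording_stop_and_save()


def main():
    # Imported here so run_trial can be tried out without the package installed.
    from pupil_labs.realtime_api.simple import discover_one_device

    # Searches the local network; returns None if no device is found in time.
    device = discover_one_device(max_search_duration_seconds=10)
    if device is None:
        raise SystemExit("No Companion device found on this network.")
    try:
        run_trial(device, "trial_1")
    finally:
        device.close()
```

Call `main()` on a computer that is connected to the same WiFi network as the Companion device.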

Check out our real-time API tutorial to learn more!

For a concrete usage example, see Track your Experiment Progress using Events!